Taste Driven Development

LLMs are incredible at writing code, but writing code is only a tiny fraction of building software that people want. Everything else is what people call “taste”.

What does taste mean? No one knows what it means, but it’s provocative. It gets the people going. To align on a definition, we’ll look at what good taste is and show how engineering taste can make your coding agents more useful. But before we can make LLMs tasteful, let’s see how their lack of taste manifests.

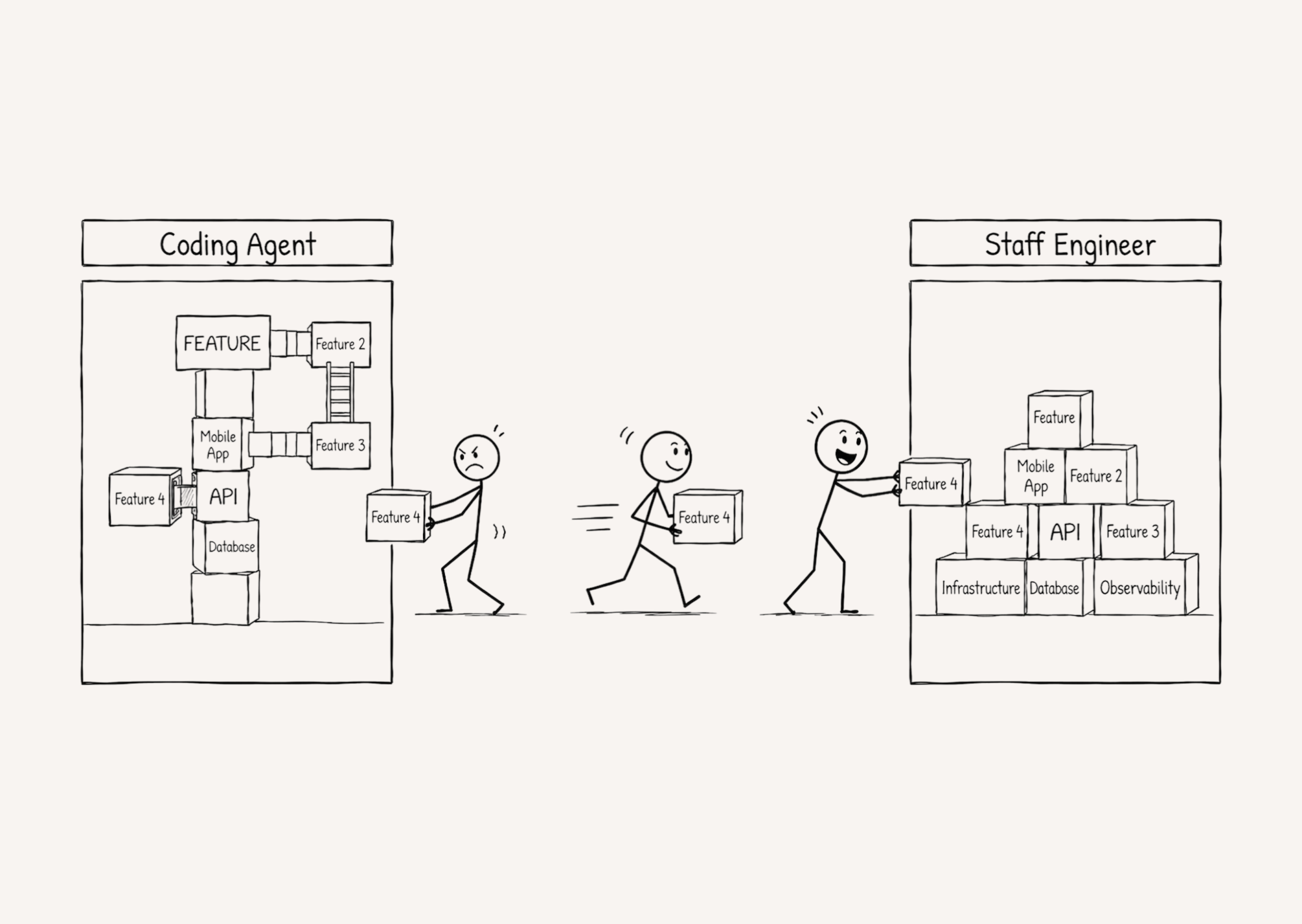

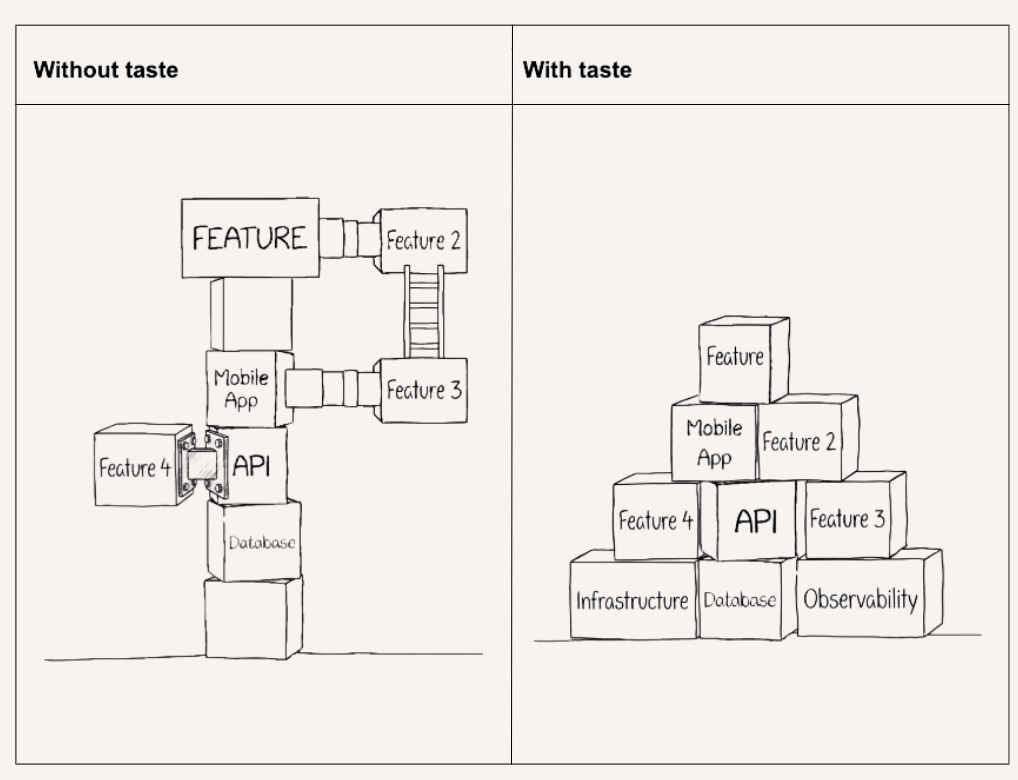

What LLMs lack in taste, they make up for with tenacity. They are exceptionally good at writing code that does the thing you asked for, but that same tenacity means they rarely stop to ask if there’s a simpler or more scalable way.

They’re great at arranging blocks to build the feature you ask for.

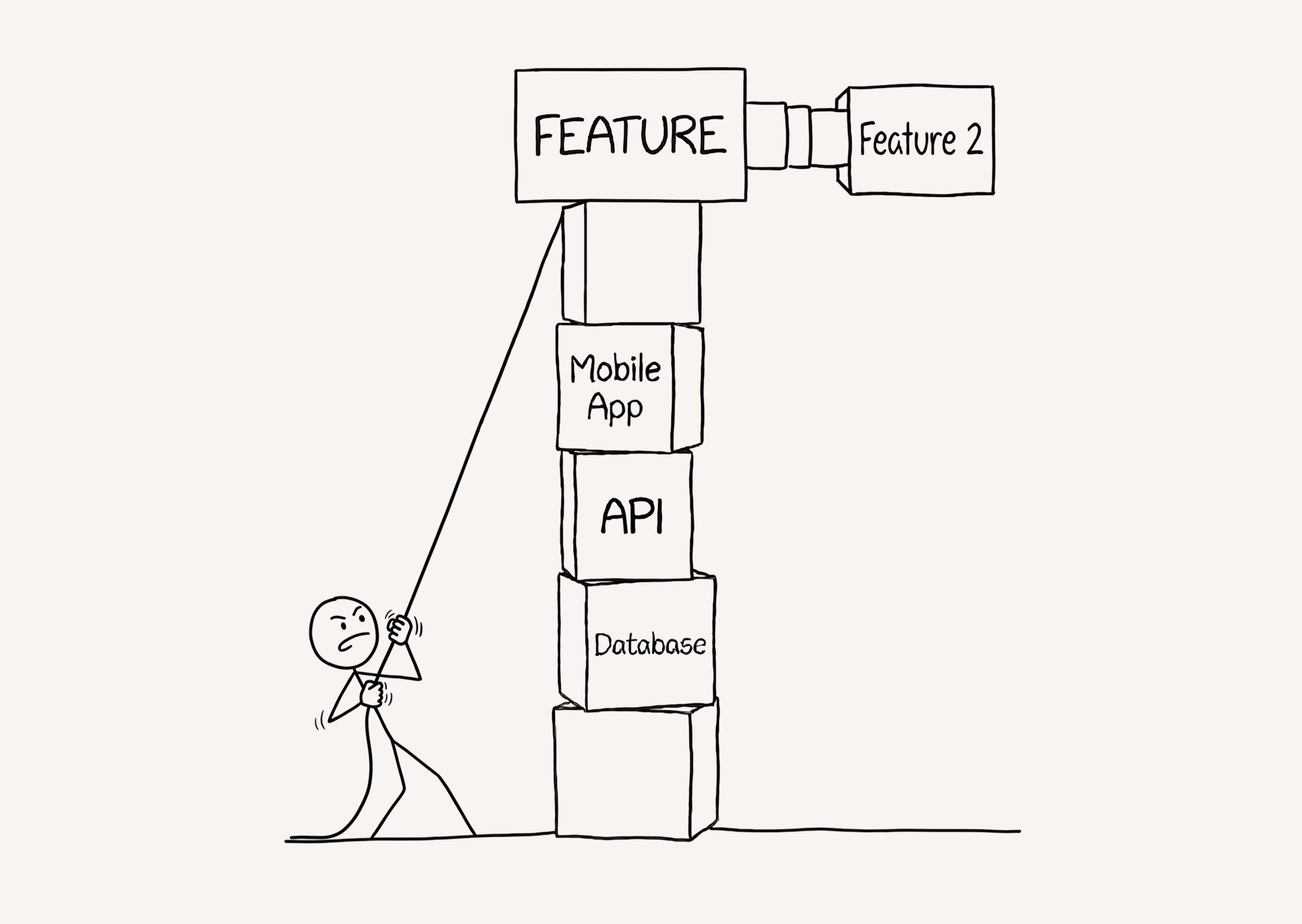

They’re also excellent at adding a second feature onto what you already have.

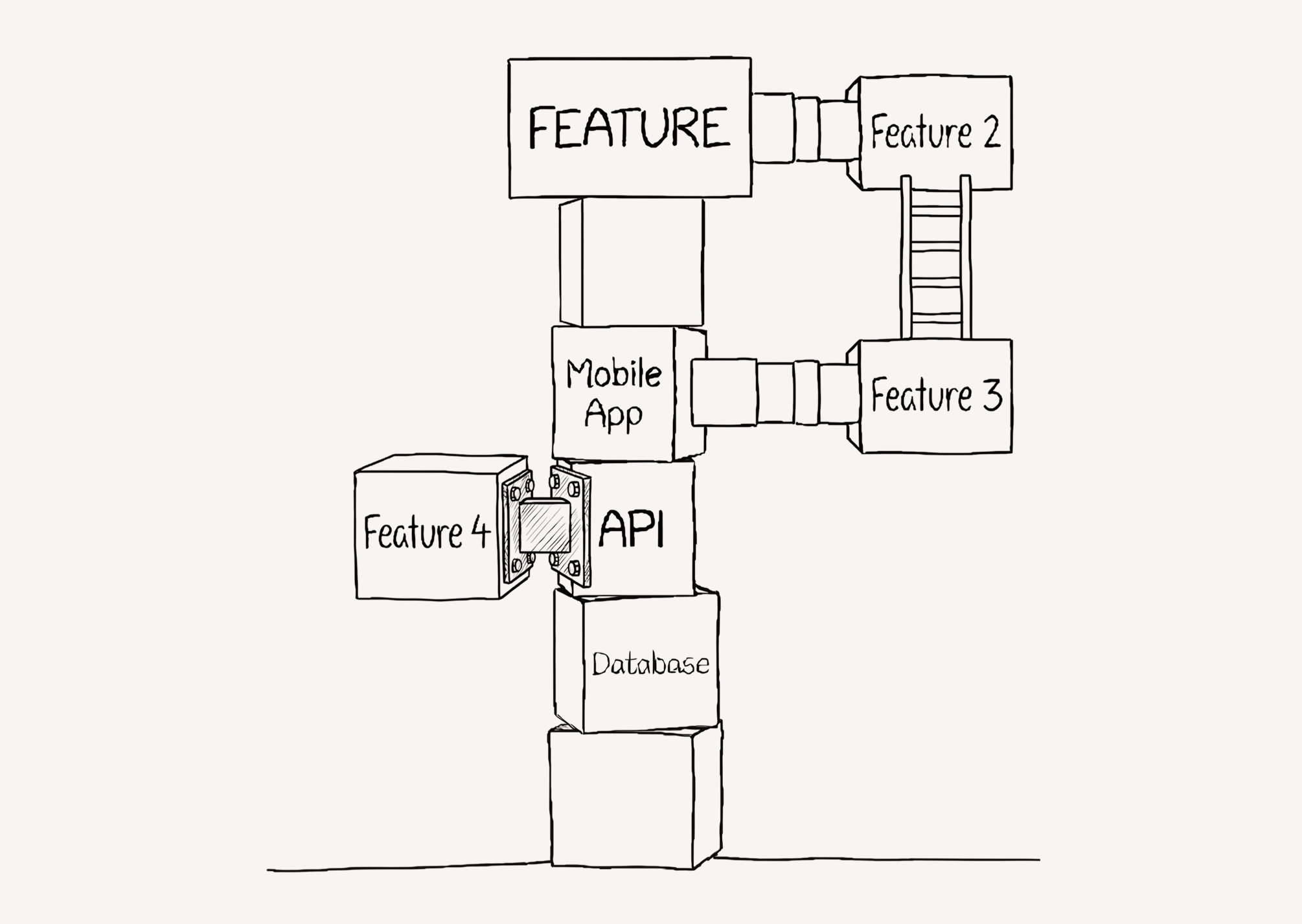

Unfortunately, when they bolt on these features, they often lack the awareness to ask whether their approach is the “right way” or to bring in a human to find a better one.

Keep going down this path and the issues compound.

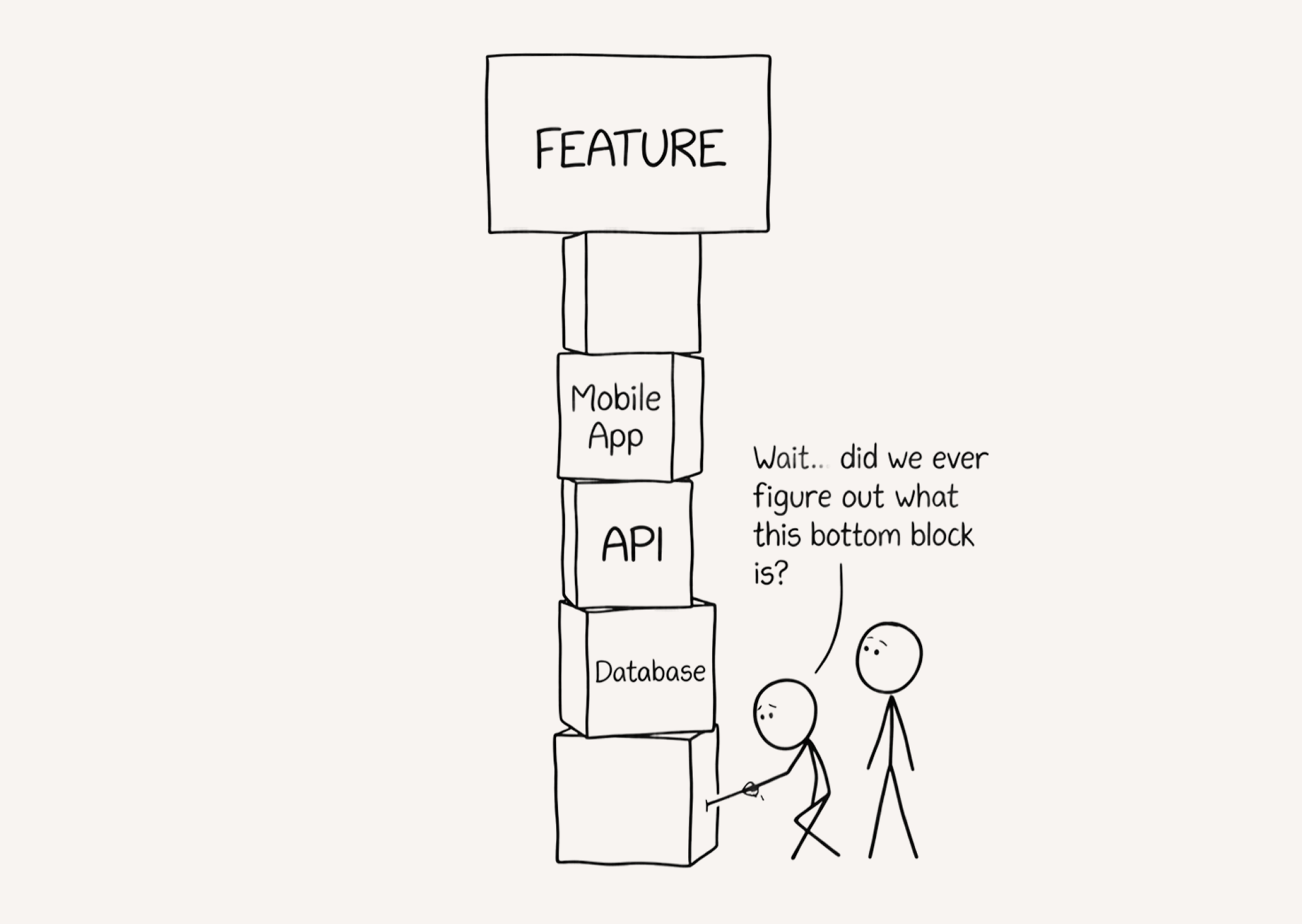

The hardest part of building software is that there’s never a single “right” way, but great software engineers ask anyway. It always depends. It depends on the scale you’re building for, the timeline you’re building against, and the technical expertise you’re building with. It depends on the runway your company has, what your sales team can sell, and how you want your brand to be perceived.

An experiment in judgement

During my first job as a software engineer, at Blue Bottle Coffee (circa 2019), management came to us with a request: “We want to build a mobile order-ahead app”.

Did I know how to build mobile apps? No. Did I have related engineering experience that’d help me figure it out? Also no. Unfortunately, coding with LLMs was still nascent, so we were on our own.

It was a long, challenging, and rewarding six months going from zero mobile engineering experience to launch, and it was one of the most fun projects I’ve ever worked on. (Action shot of me building it, from Square’s press release about the collab.)

Given the arrival of our many-parameter coding buddies, it seemed like an opportune time to evaluate how the real, human-built app diverges from what bleeding-edge coding agents would build.

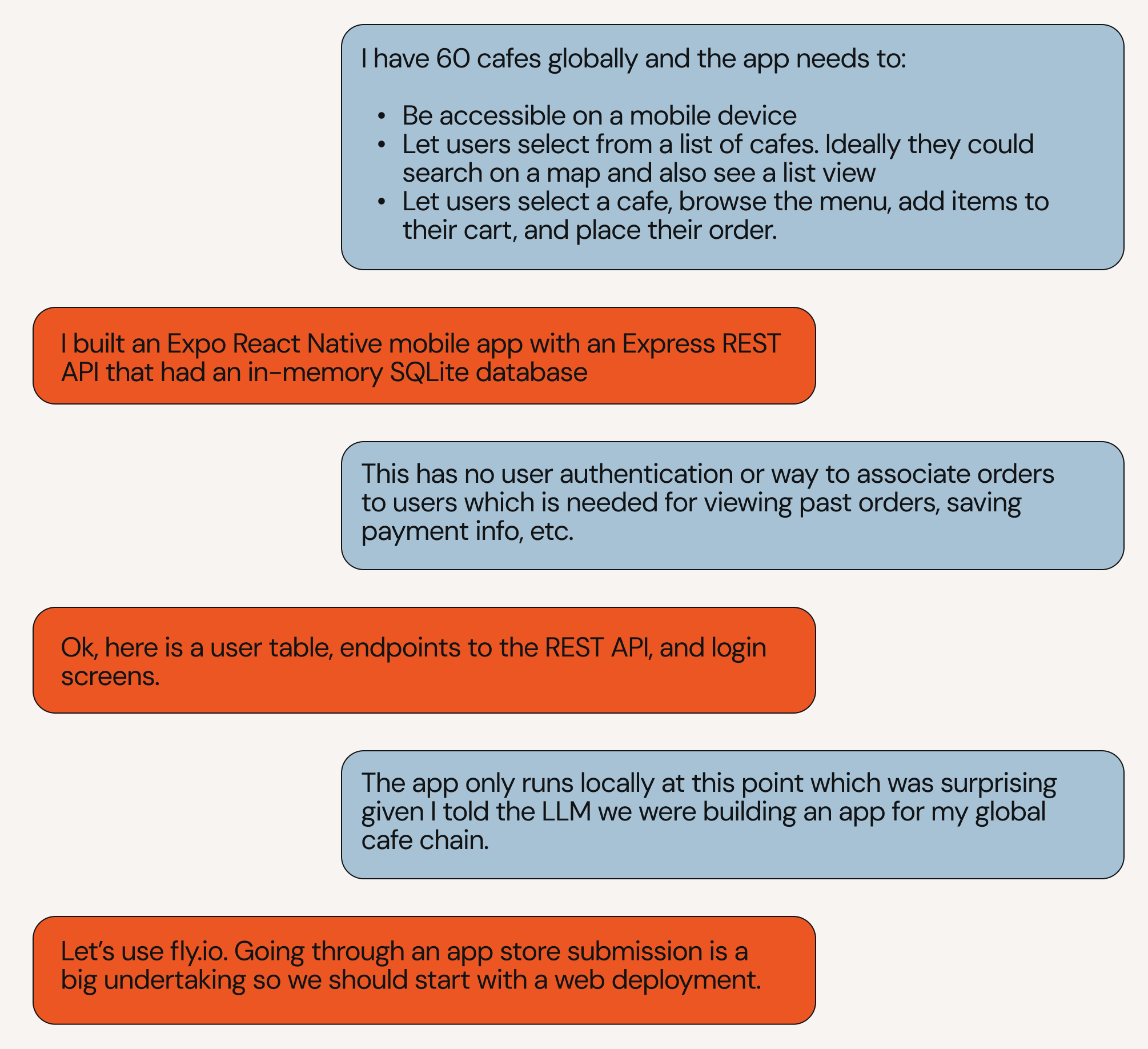

Here’s what unfolded:

I outlined for the agent what I needed to build and which features I thought were most important. Any time it asked follow-up questions, I answered as if I were back in 2019 starting the project and hadn’t already learned the lessons I did from building it the first time.

After my agent built the first version, I iterated back and forth with it on the things that were missing, guiding it towards something usable.

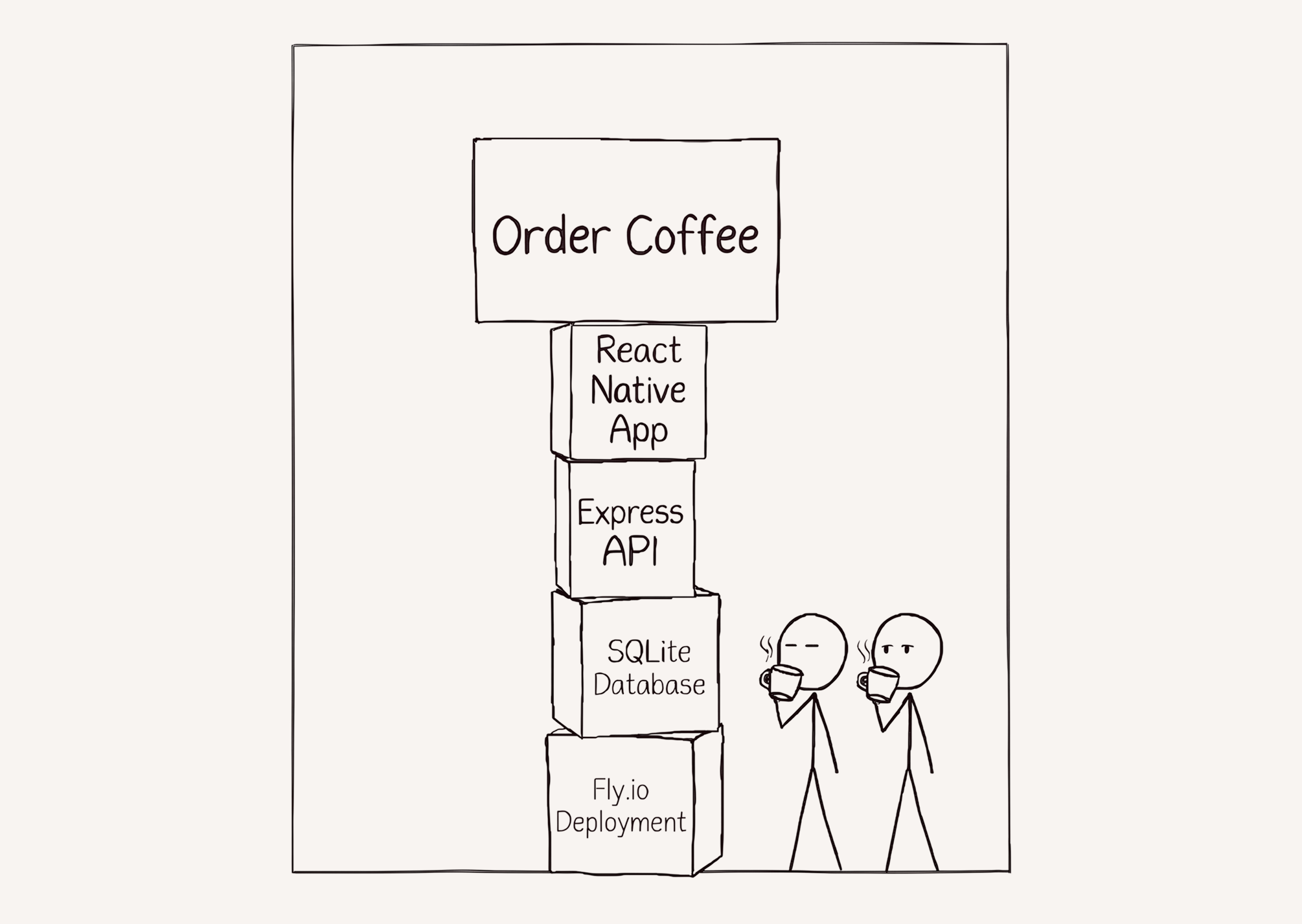

At this point we had a working app in production 🎉

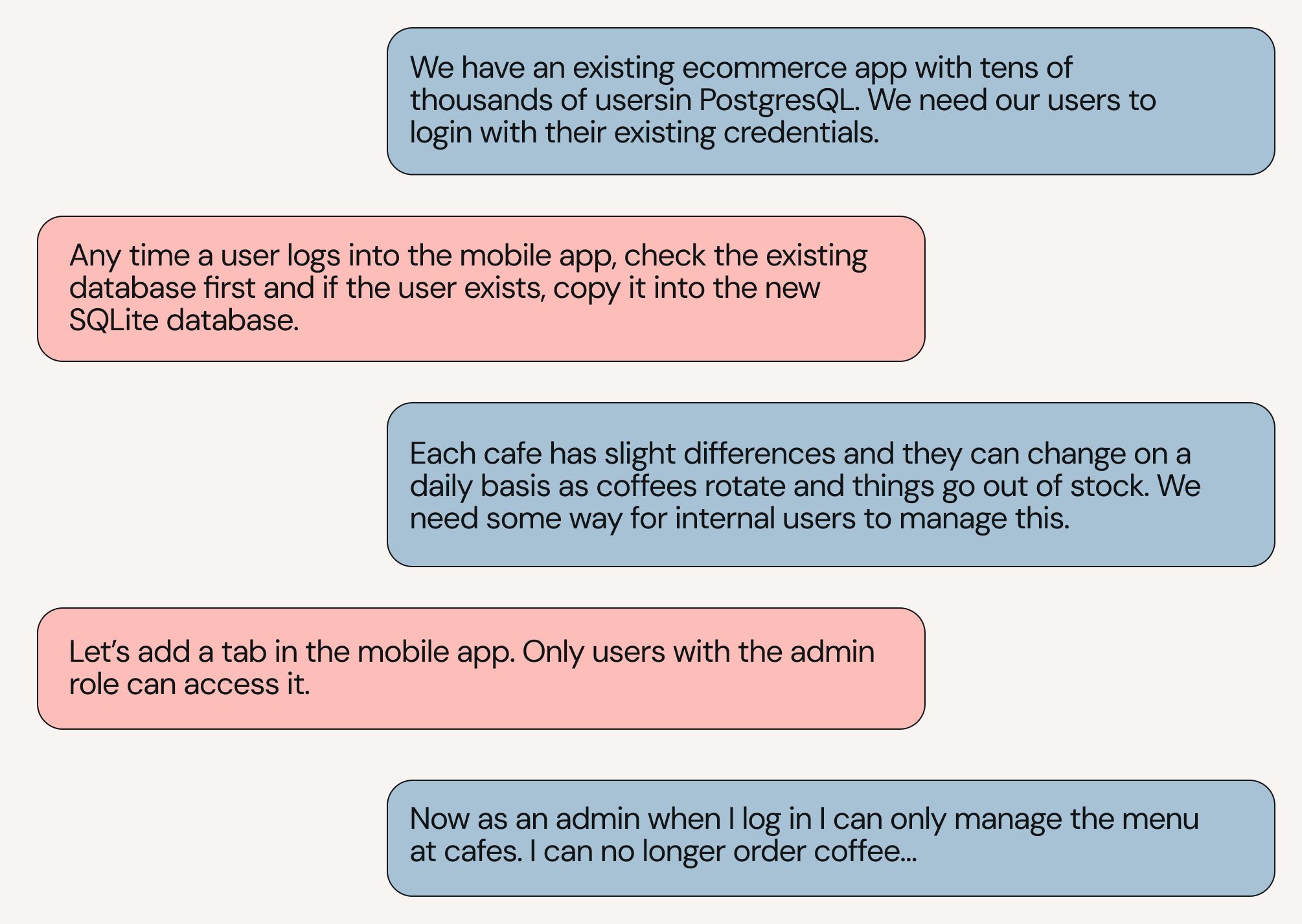

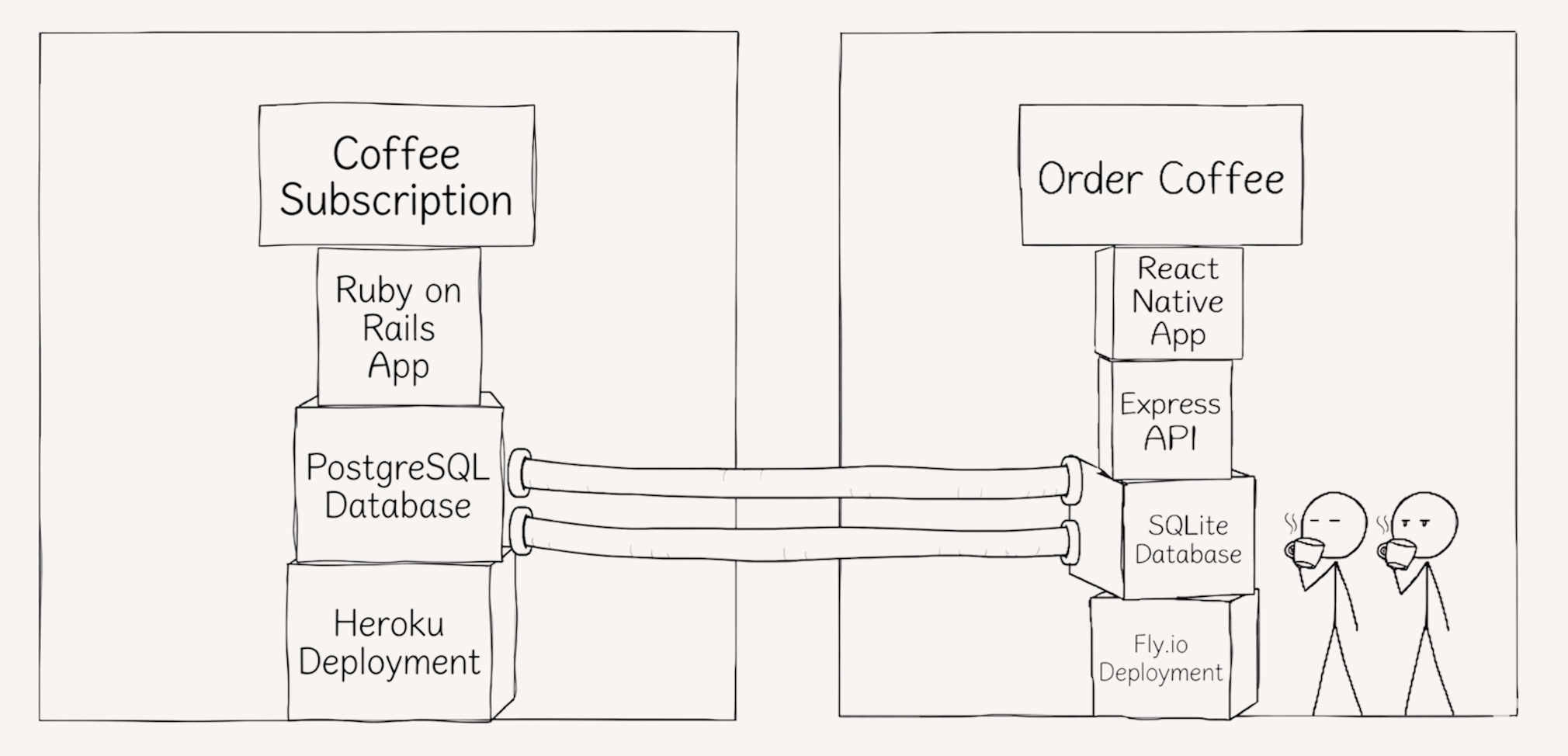

Unfortunately, there’s a problem brewing that we also encountered back in 2019. The LLM never once asked whether we had existing code that overlapped with anything it built, so it built duplicate systems.

It also never checked in to ask what types of users would be using the app. I needed users with differing roles and permissions so the right people could keep the cafe menus up to date.

At this point I had a simple app that covered most of the core functionality we built back in 2019.

I’m worried some architectural decisions may be problematic later, like the user duplication across databases, but that’s a problem for a future agent.

There are also usability issues, like not being able to order as an admin or log out. I’m confident Opus could find a way around them, but I have a feeling we’d be heading down a deep rabbit hole.

Human software engineering, engineering human software

The architecture of this Opus-built app ended up significantly different from what we built in real life at Blue Bottle. At the time, we felt the best approach was to build:

- A native Swift app

- A simple admin web UI

- A backend-for-frontend Express GraphQL API

Native Swift: At Blue Bottle, we thought long and hard about whether to build a native Swift app or something cross-platform like React Native. Ultimately, our brand was the most important thing, so we felt a native Swift app would give us the control we needed to make it pixel-perfect. We were also starting to see some industry hesitation around React Native, including Airbnb’s decision to sunset React Native in their app.

Simple web UIs: When it came time to build an admin UI, we decided that speed of shipping changes mattered most. Given App Store reviews can take weeks, it felt silly to gate admin functionality behind them. We needed to ship admin changes fast, both for development speed and in case something went wrong, so we built very simple web UIs for cafe leaders to manage their menus.

Backend for Frontend: Instead of building a siloed full-stack application, we built a backend-for-frontend that connected to our existing monolith. We’d been working on that monolith for years, so building a separate system didn’t make much sense. The monolith had thousands of users who needed to log in with existing credentials, and we thought app usage would suffer if they couldn’t use their existing logins, payment methods, etc.

None of these decisions were strictly code decisions, and none of them had a black-and-white “right” answer. They all weighed some combination of existing technical infrastructure, our team’s skill sets, company priorities, and where we thought the industry was going when we looked into our crystal ball.

Be a Tastemaker

For every software decision without a right answer, the more context LLMs have about the current state of things, the better decisions they make. They need to know things like:

- What’s the canonical way to write a class?

- How’s this service organized?

- What services already exist in the company’s architecture?

- What does the company’s roadmap look like over the next few days/months/years?

The answers to these questions are the ingredients of taste, and no matter how smart LLMs get, they’ll never build great software without it.

Every time a human engineer adds a block to the tower, it’s important to answer: “Are the blocks under it stable?” and if not, “How should we rearrange them so we’re building on solid ground?”

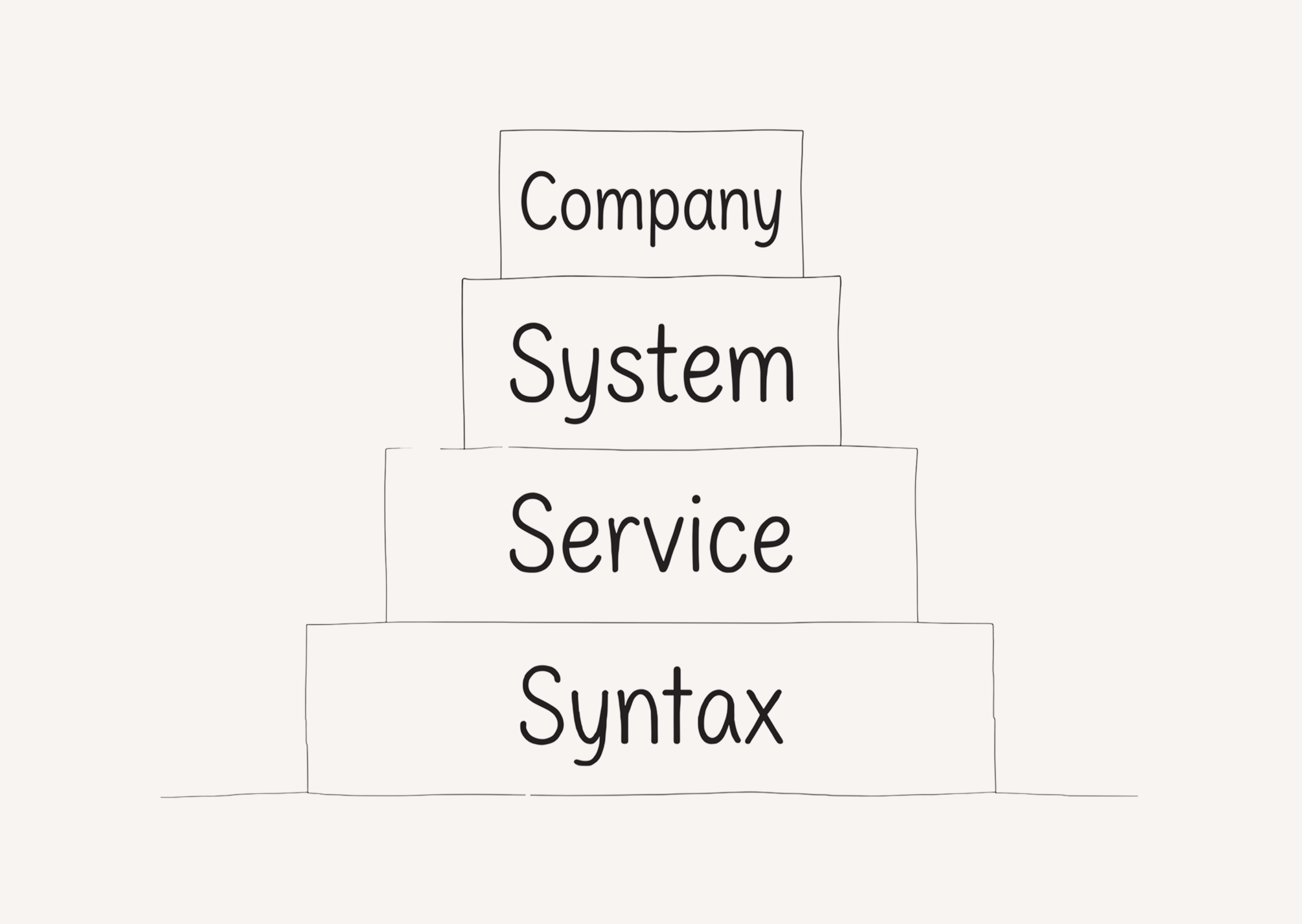

For LLMs to develop taste, they need to answer the same questions. That’s why I’m pleased to announce the newest framework for answering these questions: Conway’s Hierarchy of Agentic Implementation Needs™ (CHAIN).

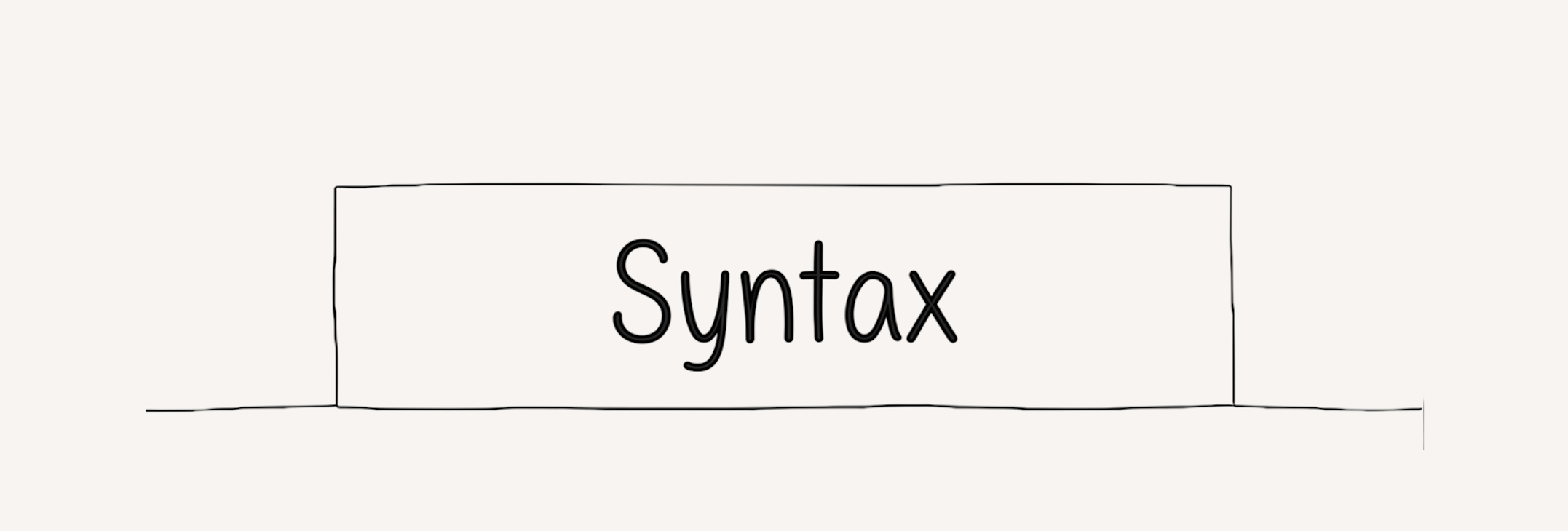

Syntax

Your LLMs need to know how to write code. SOTA models are great at this out of the box, but getting them to conform to your engineering best practices still takes some effort. With no standards in place, every LLM decision risks entangling your code base with patterns you never meant to add. When one LLM adds dependency injection, how will future LLMs know whether that’s the pattern to follow or a one-off exception?

Luckily, engineers are brimming with strong opinions on what’s best here. The tooling isn’t the hard part; agreeing on the standards is.

There are many battle-tested tools in this space, like linters and formatters. If you haven’t already, pick the ones most relevant to your tech stack and make sure your LLMs know how to use them.
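One way to make sure an agent actually uses these tools is to give it a single command that runs every check and reports what failed. Here’s a minimal Python sketch, assuming your linters and formatters can be invoked as command-line programs. The `ruff` commands in `main()` are placeholders for whatever your team agreed on, and `run_checks`/`CheckResult` are hypothetical names, not part of any tool.

```python
import subprocess
from dataclasses import dataclass


@dataclass
class CheckResult:
    name: str
    passed: bool
    output: str


def run_checks(checks: dict[str, list[str]]) -> list[CheckResult]:
    """Run each named command and record whether it exited cleanly."""
    results = []
    for name, cmd in checks.items():
        try:
            proc = subprocess.run(cmd, capture_output=True, text=True)
        except FileNotFoundError:
            # The tool isn't installed; report it instead of crashing.
            results.append(CheckResult(name, False, f"command not found: {cmd[0]}"))
            continue
        results.append(
            CheckResult(name, proc.returncode == 0, proc.stdout + proc.stderr)
        )
    return results


def main() -> int:
    # Placeholder commands: swap in your own linters and formatters.
    checks = {
        "lint": ["ruff", "check", "."],
        "format": ["ruff", "format", "--check", "."],
    }
    results = run_checks(checks)
    for r in results:
        print(f"[{'ok' if r.passed else 'FAILED'}] {r.name}")
        if not r.passed:
            print(r.output)
    # A non-zero exit code tells the agent (or CI) that something needs fixing.
    return 0 if all(r.passed for r in results) else 1
```

Wire `main()` into a script or `make check` target and mention that one command in your agent instructions. The agent then has a single, unambiguous definition of “done” for syntax.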

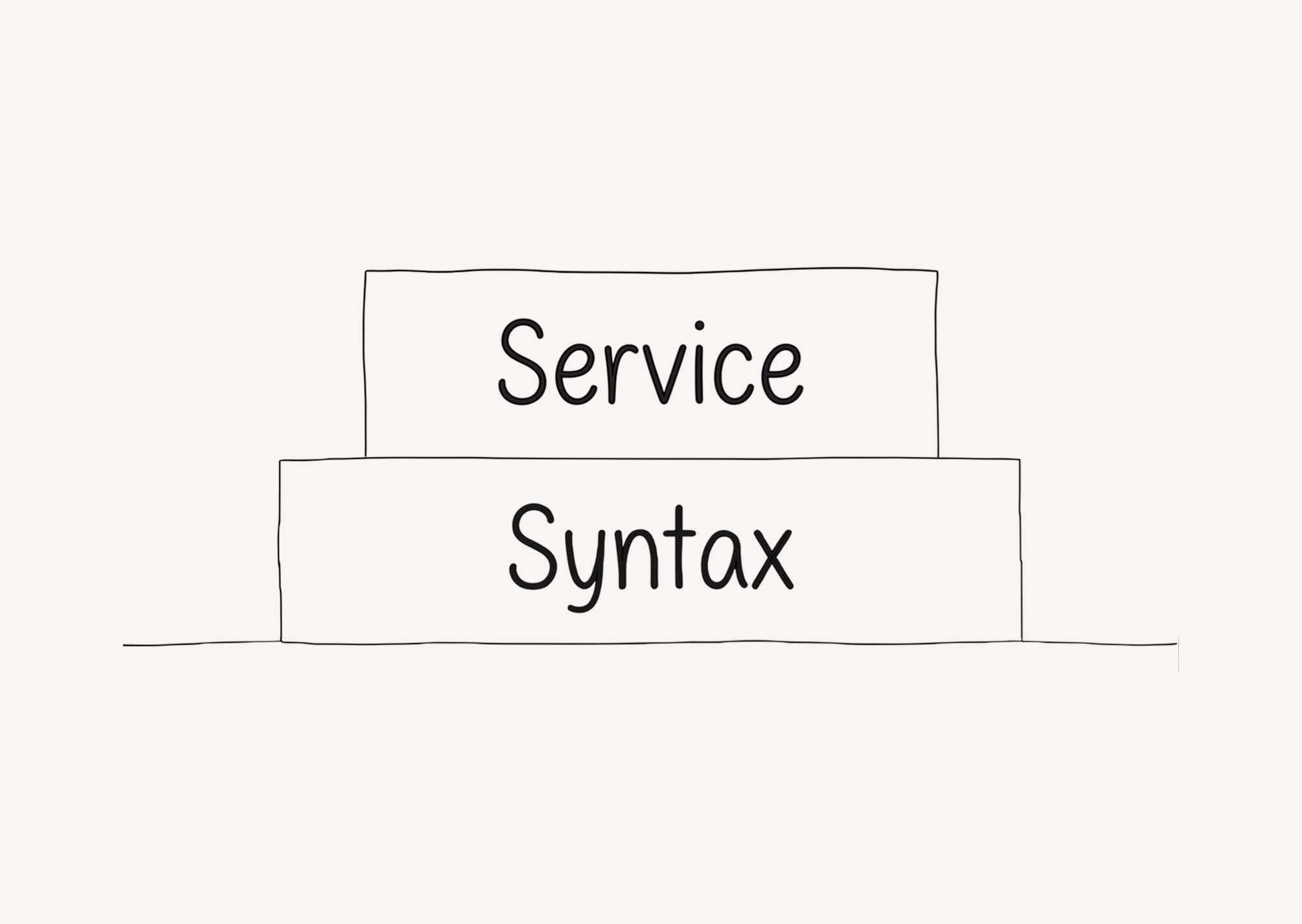

Service

Once your LLMs are reliably following your syntax rules, build on them.

Your LLM needs to know the service-level concerns of the service it’s working within. These are things like:

- The architecture your service is aspiring towards.

- What domain(s) this service is responsible for.

- How this service is deployed.

Document service-level information in a format LLMs can access. Each service needs documentation that is both inward facing (LLMs can find information about the service they’re working within) and outward facing (LLMs can find information about other services in the system).

Pick the most relevant markdown flavor for your team’s IDE (.mdc, AGENTS.md, etc.) and add anything that doesn’t neatly fit into an existing tool.

At Theory, we’re adding .mdc files that describe things like the “best practices for API design”. We’re also using Dosu to keep documentation automatically updated as code changes.
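Whichever format you pick, a small check can keep coverage honest by flagging services that are missing either kind of documentation. Here’s a minimal Python sketch, assuming a hypothetical layout where each service lives in its own directory under a shared root, `AGENTS.md` holds the inward-facing notes, and `README.md` holds the outward-facing “how to interact with this service” docs. Adjust the file names to your own conventions.

```python
from pathlib import Path

# Hypothetical conventions: adjust to your repo's layout.
INWARD_DOC = "AGENTS.md"   # how to work inside this service
OUTWARD_DOC = "README.md"  # how other services interact with it


def missing_docs(services_root: Path) -> dict[str, list[str]]:
    """Map each service directory to the doc files it is missing."""
    gaps: dict[str, list[str]] = {}
    for service_dir in sorted(p for p in services_root.iterdir() if p.is_dir()):
        missing = [
            name
            for name in (INWARD_DOC, OUTWARD_DOC)
            if not (service_dir / name).is_file()
        ]
        if missing:
            gaps[service_dir.name] = missing
    return gaps
```

Calling `missing_docs(Path("services"))` returns a mapping from service name to the files it lacks. An empty dict means every service is covered, which makes it easy to fail a CI step when someone adds a service without docs.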

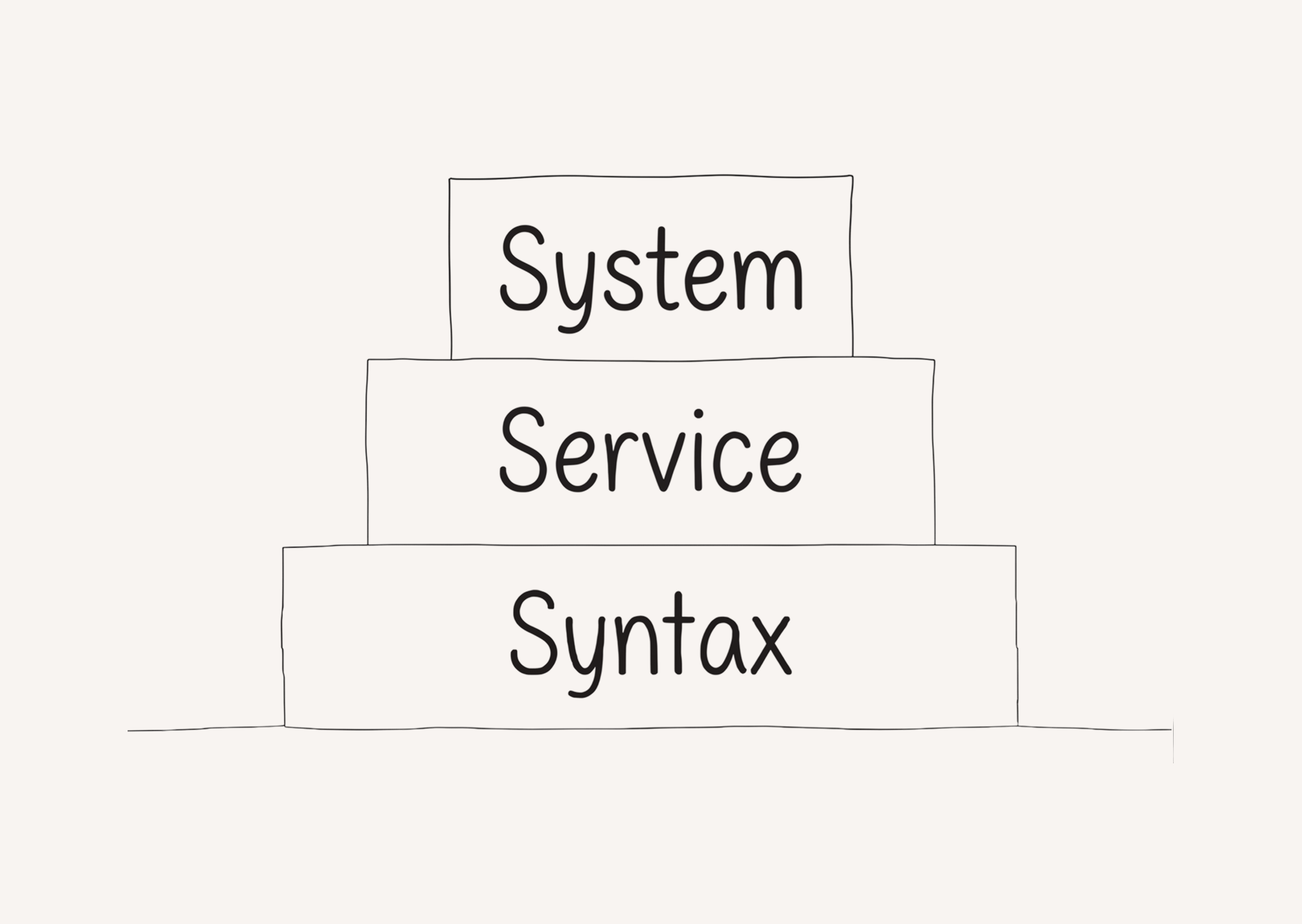

System

After your LLMs know how to reason through the syntax and the service, the next most impactful thing is the system. Every software architecture is made up of connected boxes that impact each other: Service A makes requests to Service B, and the two are deployed differently.

Coding agents need to know how the system works so they can build on it. Document system-level information in a format LLMs can access. Here are some things we’ve found useful:

- Outward facing docs: Each service should have outward facing “here’s how to interact with this service” documentation. There’s often overlap with a service’s README but this should also include information about what concepts the service is responsible for.

- Architectural diagrams: Diagrams are useful for showing how the pieces all fit together. LLMs are great at parsing text, so I recommend using Mermaid for architectural diagrams that can teach your LLM how your system works. Pro tip: they’re also great at generating these diagrams! Excalidraw is another great option here, and they even have an MCP server I’ve had a lot of luck with.

- Third party tools: In addition to the software you’re building, LLMs need to know what third party tools you already use. It might be fine for your LLM to rebuild a CRM from scratch (SaaS is dead after all), but it’s better to do that intentionally instead of doing it because the LLM didn’t know you already had a CRM.
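Because Mermaid diagrams are plain text, you can generate them from a service map you already maintain instead of redrawing them by hand. Here’s a minimal Python sketch that renders labeled nodes and caller-to-callee edges as a Mermaid `flowchart`. The example nodes mirror the Blue Bottle architecture described earlier, but the node IDs are hypothetical and which component calls which is an assumption for illustration.

```python
def to_mermaid(labels: dict[str, str], edges: list[tuple[str, str]]) -> str:
    """Render services and their dependencies as a Mermaid flowchart."""
    lines = ["flowchart LR"]
    # Declare each node once with a human-readable label.
    # Labels are assumed not to contain double quotes.
    for node_id, label in labels.items():
        lines.append(f'    {node_id}["{label}"]')
    # Each edge reads "caller --> callee".
    for caller, callee in edges:
        lines.append(f"    {caller} --> {callee}")
    return "\n".join(lines)


# Illustrative only: the edges below are assumed, not documented.
labels = {
    "ios": "Native Swift app",
    "admin": "Admin web UI",
    "bff": "Express GraphQL BFF",
    "monolith": "Existing monolith",
}
edges = [("ios", "bff"), ("admin", "bff"), ("bff", "monolith")]
print(to_mermaid(labels, edges))
```

Paste the output into a `mermaid` code fence in your docs (GitHub renders these inline). Third-party tools can be added as nodes too, so the same diagram tells the agent that a CRM already exists.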

All of this system-level documentation should be at the LLM’s fingertips, and an MCP server is the best way to distribute it today. Choose a documentation manager that has an MCP server (like Notion or Dosu) and start documenting the system information most relevant to your LLMs.

Company

Last but not least is company information. This is less critical than all the building blocks before it, but it’s still useful.

When there are multiple ways to build something that solves a problem, the tie-breaker is often some non-technical company reality.

- How much time is left until this project’s due date?

- Is your company more focused on product-led growth or sales-led growth?

- Is the company going to run out of money in a month or does it have years of runway?

The answers to some of these can be found in existing systems, like a project’s due date in Linear, but others will need to be answered explicitly. Doing so requires buy-in across the org, but giving LLMs the answers will let them make informed decisions. Building on CHAIN won’t solve every challenge, but it will decrease the likelihood that your AI-assisted PRs get labeled “slop cannon”.
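Once you have those answers, they can live next to the rest of your agent docs. Here’s a minimal Python sketch that turns a few company facts into a markdown section an agent can read. It assumes you pull the due date from your project tracker yourself (by hand or via its API, not shown), and the `CompanyContext` fields are hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class CompanyContext:
    """Non-technical tie-breakers for an agent. Field names are hypothetical."""

    project_due: date
    growth_motion: str  # e.g. "product-led" or "sales-led"
    runway_months: int

    def to_markdown(self, today: date) -> str:
        # Days remaining until the due date (negative if it has passed).
        days_left = (self.project_due - today).days
        return "\n".join([
            "## Company context",
            f"- Days until project due date: {days_left}",
            f"- Growth motion: {self.growth_motion}",
            f"- Runway: about {self.runway_months} months",
        ])
```

Passing `today` explicitly keeps the output deterministic, so you can regenerate the file on a schedule and the countdown never goes stale.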

Enabling Taste Driven Development

Conveniently, these levels of abstraction follow the same progression every engineer goes through over their career: the focus moves from the code, to the service your team owns, to the system architecture, and finally to your organization and your impact across it.

That means regardless of where you sit in an engineering org, you have a part to play in enabling Taste Driven Development. Pick the level of abstraction most relevant to your role and start shipping 🚢.

If you’re building Taste Driven Development, please reach out at adam@theoryvc.com!